Mastering Neural Network Implementation in TensorFlow: A Comprehensive Guide

Introduction: Neural networks have emerged as powerful tools for solving complex problems across various domains, including image recognition, natural language processing, and reinforcement learning. TensorFlow, an open-source machine learning framework developed by Google, provides a flexible and scalable platform for building and training neural networks. In this comprehensive guide, we’ll explore the fundamentals of implementing neural networks in TensorFlow, from defining network architectures and layers to training models and deploying them in production. Whether you’re new to TensorFlow or looking to deepen your understanding of neural network implementation, this guide will equip you with the knowledge and tools to build and train sophisticated neural networks for a wide range of applications.

- Introduction to TensorFlow: TensorFlow is a flexible machine learning framework for building and training deep learning models. Developed by Google Brain, TensorFlow provides a rich set of tools and abstractions for implementing various machine learning algorithms, including neural networks. TensorFlow’s core abstraction is the tensor, a multi-dimensional array with a fixed data type (such as float32) and shape. Operations on tensors can be assembled into a computational graph, which TensorFlow can optimize and execute efficiently on CPUs, GPUs, or TPUs. In TensorFlow 2.x, operations run eagerly by default, and graphs are built when you wrap Python code in tf.function.
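A minimal sketch, assuming TensorFlow 2.x (where eager execution is the default), of creating tensors and applying an operation to them. The values are arbitrary and chosen only for illustration.

```python
import tensorflow as tf

# A rank-2 tensor (a 2x2 matrix); Python floats default to float32.
x = tf.constant([[1.0, 2.0], [3.0, 4.0]])
# A 2x1 column vector.
w = tf.constant([[0.5], [-1.0]])

# In TensorFlow 2.x this executes immediately and returns a concrete tensor.
y = tf.matmul(x, w)

print(x.shape, x.dtype)
print(y.numpy())  # rows: 1*0.5 + 2*(-1) = -1.5 and 3*0.5 + 4*(-1) = -2.5
```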

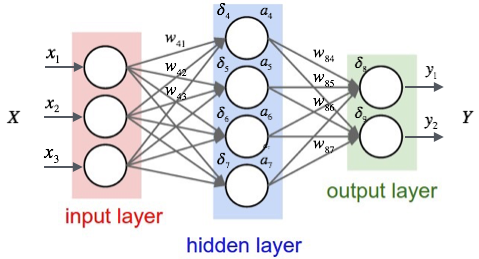

- Defining Neural Network Architectures: The first step in implementing a neural network in TensorFlow is defining its architecture: the number of layers, their types, and how they connect. TensorFlow includes Keras, a high-level API for building neural networks with minimal boilerplate code. With Keras you can build a Sequential model, which stacks layers in a linear order, or use the functional API, which connects layers as a graph and supports branches, shared layers, and multiple inputs or outputs. Commonly used layers include dense (fully connected), convolutional, recurrent, dropout, and normalization layers. You can customize each layer by specifying its activation function, regularization, weight initialization, and other parameters.
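The two model-building styles described above can be sketched as follows. The input size (20 features), layer widths, and 3-class output are illustrative assumptions, not recommendations.

```python
import tensorflow as tf

layers = tf.keras.layers

# Sequential API: a linear stack of layers, each customized via arguments.
sequential_model = tf.keras.Sequential([
    tf.keras.Input(shape=(20,)),
    layers.Dense(64, activation="relu",
                 kernel_initializer="he_normal",                      # initialization
                 kernel_regularizer=tf.keras.regularizers.l2(1e-4)),  # regularization
    layers.Dropout(0.3),
    layers.Dense(3, activation="softmax"),
])

# Functional API: layers are called on tensors, so the model can branch.
inputs = tf.keras.Input(shape=(20,))
hidden = layers.Dense(64, activation="relu")(inputs)
hidden = layers.BatchNormalization()(hidden)
# A skip-style branch: concatenate the raw input with the hidden features.
merged = layers.Concatenate()([inputs, hidden])
outputs = layers.Dense(3, activation="softmax")(merged)
functional_model = tf.keras.Model(inputs=inputs, outputs=outputs)

sequential_model.summary()
functional_model.summary()
```

The Sequential form is the shorter choice when every layer has exactly one input and one output; the concatenation in the second model is the kind of connection that requires the functional API.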

- Building the Computational Graph: A computational graph represents the flow of data through the network: nodes represent operations, and edges represent the tensors flowing between them. In TensorFlow 1.x, code always built such a graph first and executed it later in a session (deferred, or lazy, execution). TensorFlow 2.x executes operations eagerly by default, which makes code easier to write and debug, and builds a graph only when you decorate a function with tf.function: TensorFlow traces the Python function for each new input signature and reuses the resulting graph on later calls. Graph execution lets TensorFlow optimize operations (for example, by pruning unused nodes and fusing operations) and run them without Python overhead. Automatic differentiation for training, provided by tf.GradientTape, works in both eager and graph mode.
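A minimal sketch, assuming TensorFlow 2.x, of the eager-versus-graph distinction: the same Python function is called eagerly and then traced with tf.function, and the traced graph's operation types are listed. The function name `dense_relu` is hypothetical.

```python
import tensorflow as tf


def dense_relu(x, w, b):
    # An ordinary Python function built from TensorFlow ops.
    return tf.nn.relu(tf.matmul(x, w) + b)


x = tf.random.normal([8, 4])
w = tf.random.normal([4, 2])
b = tf.zeros([2])

# Eager call: each op runs immediately.
eager_out = dense_relu(x, w, b)

# tf.function traces the function into a graph for this input signature.
graph_fn = tf.function(dense_relu)
graph_out = graph_fn(x, w, b)

# Inspect the traced graph: its nodes are operations such as MatMul and Relu.
concrete = graph_fn.get_concrete_function(x, w, b)
op_types = {op.type for op in concrete.graph.get_operations()}
print(sorted(op_types))

# Both execution modes compute the same values.
tf.debugging.assert_near(eager_out, graph_out)
```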

- Training Neural Networks: Training a neural network involves iteratively updating its parameters (weights and biases) to minimize a loss function that measures the difference between the predicted output and the true output. TensorFlow provides a variety of optimization algorithms, such as stochastic gradient descent (SGD), Adam, and RMSprop, for minimizing the loss function. To train a neural network in TensorFlow, you define a loss function, choose an optimizer, and supply a training dataset of input-output pairs. On each training step, tf.GradientTape records the forward pass and computes the gradients of the loss with respect to the parameters, and the optimizer uses those gradients to update the parameters. Keras’s compile() and fit() methods run this loop for you; writing it out explicitly gives you full control over each step.
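The training steps described above can be sketched as an explicit loop. This assumes TensorFlow 2.x and uses synthetic data (y = 3x - 1 plus noise) and a hypothetical `train_step` helper purely for illustration.

```python
import tensorflow as tf

tf.random.set_seed(0)

# Synthetic regression data (an assumption for illustration): y = 3x - 1 plus noise.
x_train = tf.random.uniform([256, 1], minval=-1.0, maxval=1.0)
y_train = 3.0 * x_train - 1.0 + tf.random.normal([256, 1], stddev=0.05)
dataset = tf.data.Dataset.from_tensor_slices((x_train, y_train)).shuffle(256).batch(32)

model = tf.keras.Sequential([
    tf.keras.Input(shape=(1,)),
    tf.keras.layers.Dense(16, activation="relu"),
    tf.keras.layers.Dense(1),
])
loss_fn = tf.keras.losses.MeanSquaredError()
optimizer = tf.keras.optimizers.Adam(learning_rate=0.01)


@tf.function
def train_step(x, y):
    # Record the forward pass so the tape can differentiate through it.
    with tf.GradientTape() as tape:
        predictions = model(x, training=True)
        loss = loss_fn(y, predictions)
    # Gradients of the loss with respect to every weight and bias.
    grads = tape.gradient(loss, model.trainable_variables)
    # The optimizer turns the gradients into parameter updates.
    optimizer.apply_gradients(zip(grads, model.trainable_variables))
    return loss


initial_loss = float(loss_fn(y_train, model(x_train)))
for epoch in range(50):
    for x_batch, y_batch in dataset:
        train_step(x_batch, y_batch)
final_loss = float(loss_fn(y_train, model(x_train)))
print(f"MSE before training: {initial_loss:.4f}, after: {final_loss:.4f}")
```

Calling `model.compile(optimizer=optimizer, loss=loss_fn)` followed by `model.fit(dataset, epochs=50)` runs an equivalent loop internally and adds conveniences such as callbacks and progress reporting.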

- Evaluating Model Performance: After training a neural network, it’s important to evaluate it on data it never saw during training. A validation set, monitored during training, reveals overfitting (training loss keeps falling while validation loss rises) and guides tuning; a separate held-out test set gives the final estimate of how well the model generalizes. TensorFlow provides a range of metrics for classification models, such as accuracy, precision, recall, and F1 score (the harmonic mean of precision and recall). For regression models, you can use metrics such as mean squared error (MSE) and mean absolute error (MAE) to measure the difference between predicted and true values. By monitoring these metrics on the validation set during training, you can tune hyperparameters, adjust the model architecture, and apply regularization techniques to improve model performance.
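A minimal sketch of monitoring classification metrics on held-out data, assuming TensorFlow 2.x and a synthetic, linearly separable dataset invented for illustration. F1 is derived by hand here from precision and recall as their harmonic mean.

```python
import tensorflow as tf

tf.random.set_seed(1)

# Synthetic binary classification data (assumption): label is 1 when x0 + x1 > 0.
features = tf.random.normal([1000, 2])
labels = tf.cast(tf.reduce_sum(features, axis=1, keepdims=True) > 0, tf.float32)

# Hold out the last 200 examples as validation data the model never trains on.
x_train, x_val = features[:800], features[800:]
y_train, y_val = labels[:800], labels[800:]

model = tf.keras.Sequential([
    tf.keras.Input(shape=(2,)),
    tf.keras.layers.Dense(8, activation="relu"),
    tf.keras.layers.Dense(1, activation="sigmoid"),
])
model.compile(
    optimizer=tf.keras.optimizers.Adam(0.05),
    loss="binary_crossentropy",
    metrics=[
        tf.keras.metrics.BinaryAccuracy(name="accuracy"),
        tf.keras.metrics.Precision(name="precision"),
        tf.keras.metrics.Recall(name="recall"),
    ],
)

# validation_data makes fit() report val_* metrics after every epoch; a growing
# gap between training and validation metrics is a sign of overfitting.
history = model.fit(x_train, y_train, epochs=20, batch_size=32,
                    validation_data=(x_val, y_val), verbose=0)

results = model.evaluate(x_val, y_val, verbose=0, return_dict=True)
precision, recall = results["precision"], results["recall"]
f1 = 2 * precision * recall / (precision + recall + 1e-7)  # epsilon avoids 0/0
print(results, "F1:", f1)
```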

- Fine-Tuning Pretrained Models: In many cases, it’s beneficial to start from a pretrained model, such as a convolutional neural network (CNN) trained on a large image dataset like ImageNet, instead of training a new model from scratch on a similar task. TensorFlow offers pretrained models through TensorFlow Hub, a repository of reusable model components, and through tf.keras.applications, which bundles well-known architectures such as ResNet and MobileNet with optional ImageNet weights. A common recipe is to freeze the pretrained layers, train only a new task-specific head on top, and then optionally unfreeze some of the top pretrained layers and continue training with a low learning rate. This lets you adapt the pretrained model to new datasets with limited labeled data, and it is one of the most common forms of transfer learning.
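The freeze-then-fine-tune recipe can be sketched with tf.keras.applications. This assumes TensorFlow 2.x and a hypothetical 5-class image task at 96x96 resolution; `weights=None` is used only so the sketch runs offline, and in practice you would pass `weights="imagenet"` to load pretrained weights. Unfreezing the last 20 layers is an arbitrary illustrative choice.

```python
import tensorflow as tf

# Assumption: weights=None keeps this runnable offline; use weights="imagenet"
# to download ImageNet-pretrained weights in real use.
base_model = tf.keras.applications.MobileNetV2(
    input_shape=(96, 96, 3), include_top=False, weights=None)

# Stage 1: freeze the feature extractor so only the new head is trained.
base_model.trainable = False

inputs = tf.keras.Input(shape=(96, 96, 3))
# MobileNetV2 expects pixels scaled from [0, 255] to [-1, 1].
x = tf.keras.layers.Rescaling(1.0 / 127.5, offset=-1.0)(inputs)
x = base_model(x, training=False)  # keep BatchNorm layers in inference mode
x = tf.keras.layers.GlobalAveragePooling2D()(x)
x = tf.keras.layers.Dropout(0.2)(x)
outputs = tf.keras.layers.Dense(5, activation="softmax")(x)  # hypothetical 5 classes
model = tf.keras.Model(inputs, outputs)

model.compile(optimizer=tf.keras.optimizers.Adam(1e-3),
              loss="sparse_categorical_crossentropy", metrics=["accuracy"])
head_only_count = len(model.trainable_variables)
print("trainable tensors in stage 1:", head_only_count)

# Stage 2 (optional): unfreeze the top of the base model and recompile with a
# much lower learning rate so the pretrained features change only slightly.
base_model.trainable = True
for layer in base_model.layers[:-20]:
    layer.trainable = False
model.compile(optimizer=tf.keras.optimizers.Adam(1e-5),
              loss="sparse_categorical_crossentropy", metrics=["accuracy"])
fine_tune_count = len(model.trainable_variables)
print("trainable tensors in stage 2:", fine_tune_count)
```

Recompiling after changing `trainable` makes sure the new setting takes effect in training.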

- Handling Data Input and Preprocessing: Effective data input and preprocessing are crucial for training neural networks that generalize well to unseen data. TensorFlow provides utilities for loading and preprocessing various types of data, including images, text, audio, and structured data. For image data, the tf.keras.preprocessing.image module can load images from directories, resize them, apply data augmentation, and convert them to arrays; TensorFlow’s documentation now recommends tf.keras.utils.image_dataset_from_directory together with Keras preprocessing layers such as Resizing, RandomFlip, and Rescaling for new code. For text data, the tf.keras.preprocessing.text module (superseded by the TextVectorization layer) tokenizes text and maps words to integer indices; the sequences are then padded to a common length, and an Embedding layer converts the indices into learned word vectors. The tf.data API ties these steps into an efficient input pipeline with shuffling, batching, and prefetching. Done well, preprocessing reduces overfitting (through augmentation), improves convergence (through normalization), and enhances model performance.

- Deploying Models in Production: Once you have trained and evaluated a neural network model, the next step is deploying it in production to make predictions on new data. TensorFlow provides several deployment options: serving models over REST or gRPC APIs with TensorFlow Serving, running them on mobile and embedded devices with TensorFlow Lite, and running them in web browsers with TensorFlow.js. By exporting trained models to standard formats such as SavedModel or the TensorFlow Lite format, you can deploy them to these platforms and integrate them into production systems.
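A minimal sketch, assuming TensorFlow 2.x, that ties preprocessing and deployment together: a hypothetical toy model (`StandardizedLinearModel`) stores its input-standardization statistics inside itself, so the exported artifact applies the same preprocessing at serving time as during training. It is then exported to SavedModel, reloaded through its serving signature, and converted to TensorFlow Lite. The weights are untrained placeholders and the data is synthetic.

```python
import tempfile

import numpy as np
import tensorflow as tf


class StandardizedLinearModel(tf.Module):
    """Hypothetical toy model: standardizes its input, then computes x @ w + b."""

    def __init__(self, mean, std):
        super().__init__()
        # Preprocessing statistics as non-trainable variables, saved with the model.
        self.mean = tf.Variable(mean, dtype=tf.float32, trainable=False)
        self.std = tf.Variable(std, dtype=tf.float32, trainable=False)
        # Placeholder weights; a real model would be trained first.
        self.w = tf.Variable(tf.random.normal([3, 1]), name="w")
        self.b = tf.Variable(tf.zeros([1]), name="b")

    @tf.function(input_signature=[tf.TensorSpec(shape=[None, 3], dtype=tf.float32)])
    def __call__(self, x):
        x = (x - self.mean) / self.std  # same preprocessing at train and serve time
        return tf.matmul(x, self.w) + self.b


# Preprocessing statistics computed from (synthetic) training data.
train_x = np.random.default_rng(0).normal(5.0, 2.0, size=(100, 3)).astype("float32")
model = StandardizedLinearModel(train_x.mean(axis=0), train_x.std(axis=0))

# 1. Export to the SavedModel format, the format TensorFlow Serving loads.
export_dir = tempfile.mkdtemp()
tf.saved_model.save(model, export_dir,
                    signatures=model.__call__.get_concrete_function())

# 2. Reload and call the serving signature, as a serving system would.
reloaded = tf.saved_model.load(export_dir)
serve = reloaded.signatures["serving_default"]
sample = tf.constant(train_x[:2])
served = serve(x=sample)["output_0"]  # a single returned tensor is keyed "output_0"
print(served.numpy())

# 3. Convert the same SavedModel to TensorFlow Lite for mobile/embedded targets.
converter = tf.lite.TFLiteConverter.from_saved_model(export_dir)
tflite_bytes = converter.convert()
print("TFLite model size:", len(tflite_bytes), "bytes")
```

Keeping preprocessing inside the exported function is one way to avoid training/serving skew; the alternative is to reimplement it in every client, which is easy to get subtly wrong.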

- Debugging and Troubleshooting: Debugging neural network models can be challenging because of the complexity of the models and the large volumes of data involved. TensorFlow provides several tools for debugging and troubleshooting models, including TensorBoard, a visualization toolkit for tracking metrics such as loss and accuracy, inspecting the model graph, and viewing histograms of weights over the course of training. Because TensorFlow 2.x executes eagerly by default, you can also debug model code interactively with ordinary Python tools and inspect intermediate values as they are computed; calling tf.config.run_functions_eagerly(True) extends this to code inside tf.function. By systematically diagnosing and addressing issues such as vanishing gradients, exploding gradients, and overfitting, you can improve the stability and reliability of your neural network models.
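A minimal debugging sketch, assuming TensorFlow 2.x: it forces tf.function code to run eagerly, fails fast on a non-finite loss, and reports one gradient norm per trainable variable, which is a quick way to spot vanishing (near-zero) or exploding (very large) gradients. The model, random data, and the `gradient_norms` helper are hypothetical; what counts as "too small" or "too large" depends on the model.

```python
import tensorflow as tf

tf.random.set_seed(0)

# Run tf.function-decorated code eagerly so print() and Python debuggers see
# concrete values inside it. This is slower; turn it off when done.
tf.config.run_functions_eagerly(True)

# A small sigmoid network; sigmoid activations are a classic source of
# vanishing gradients in deeper stacks.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(10,)),
    tf.keras.layers.Dense(32, activation="sigmoid"),
    tf.keras.layers.Dense(32, activation="sigmoid"),
    tf.keras.layers.Dense(1),
])
x = tf.random.normal([64, 10])
y = tf.random.normal([64, 1])


@tf.function
def gradient_norms(x, y):
    with tf.GradientTape() as tape:
        loss = tf.reduce_mean(tf.square(model(x) - y))
        # Raise an error immediately if the loss becomes NaN or Inf.
        loss = tf.debugging.check_numerics(loss, "loss is not finite")
    grads = tape.gradient(loss, model.trainable_variables)
    # One L2 norm per trainable variable (kernels and biases).
    return [tf.norm(g) for g in grads]


norms = gradient_norms(x, y)
for var, norm in zip(model.trainable_variables, norms):
    print(f"{var.name:<16} shape={tuple(var.shape)} grad_norm={float(norm):.3e}")

tf.config.run_functions_eagerly(False)
```

To track the same kinds of signals over a whole training run, you can pass `tf.keras.callbacks.TensorBoard(log_dir="logs")` in the `callbacks` list of `model.fit` and open the logs with `tensorboard --logdir logs`.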

- Conclusion: In conclusion, TensorFlow provides a powerful and flexible platform for implementing neural networks and solving a wide range of machine learning tasks. By mastering the fundamentals of neural network implementation in TensorFlow, including defining network architectures, training models, evaluating performance, and deploying models in production, you can build sophisticated and scalable machine learning systems that deliver real-world value. Whether you’re building image classifiers, natural language processors, or recommendation systems, TensorFlow provides the tools and abstractions you need to turn your machine learning ideas into reality. So dive into the world of neural network implementation in TensorFlow, experiment with different architectures and techniques, and unlock the full potential of deep learning in your applications.